Hardware Breakdown Presents: Meta Ray-Ban Display

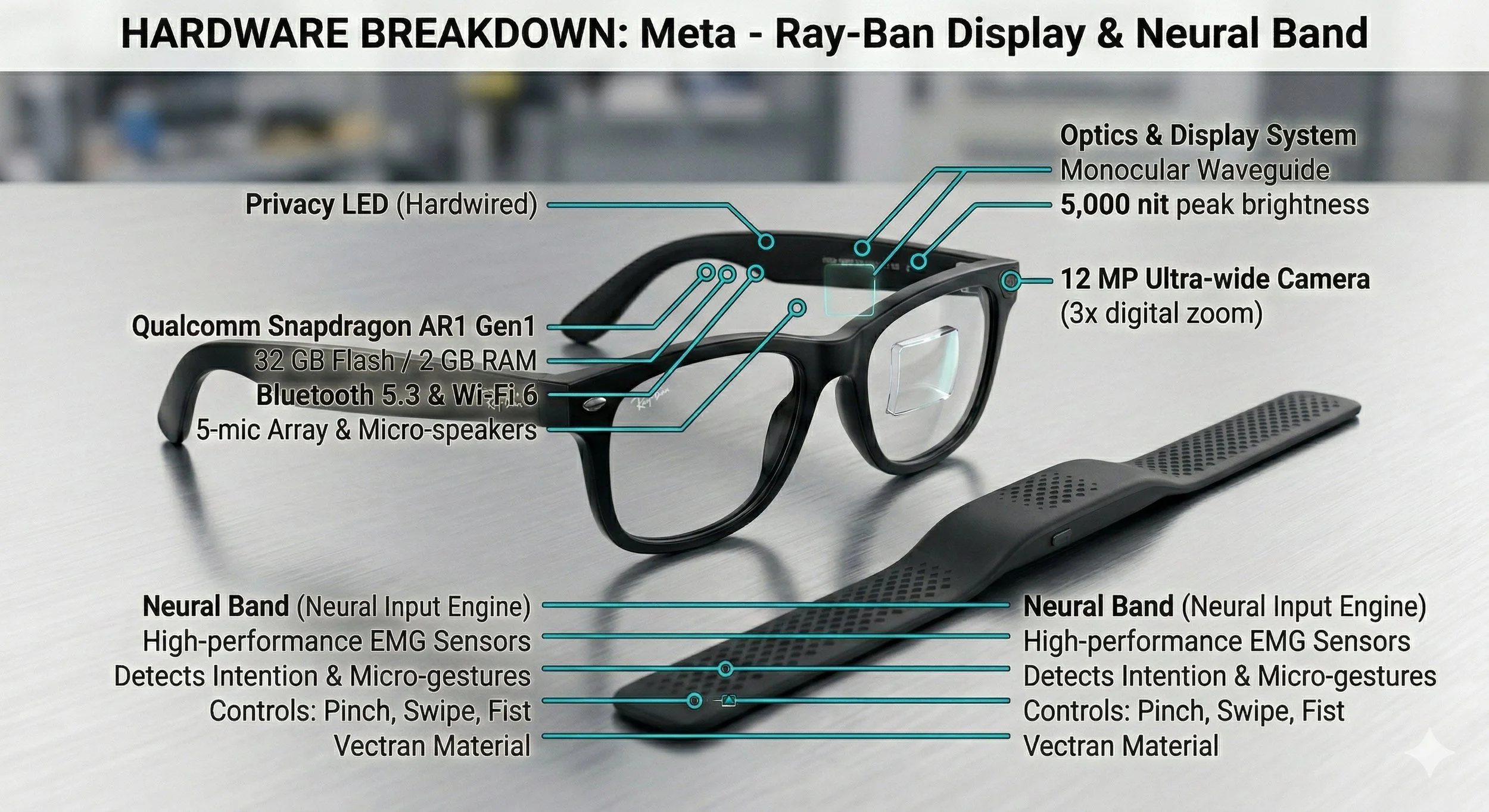

The Meta Ray-Ban Display is Meta’s most ambitious attempt at mainstream wearable computing. Though not a full AR device, it combines advanced optics, miniaturized computing, and neural-input technology in a compact form, creating a hybrid system capable of visual output, environmental awareness, and EMG-based input via the Neural Band. In this breakdown, I examine the Meta Ray-Ban Display’s core components and features.

The Meta Ray-Ban Display and Hardware

For me, the defining feature of the Ray-Ban Display is its waveguide-based display (also known as waveguide optics), a first for Meta's smart-glasses product line.

iFixit's teardown reveals what many consider a groundbreaking waveguide stack, which delivers a bright image from compact components embedded inside the Ray-Ban frame. The display itself is static and monocular: it projects a fixed-position image into a single lens rather than a full AR overlay.

According to VR Compare (a specialized database and comparison tool for VR and AR headsets), the display offers a 600 x 600 resolution with a 20-degree diagonal field of view. This places it in what Meta calls the "glanceable information" category rather than immersive AR.
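To put those two figures in perspective, here is a rough back-of-the-envelope sketch of the display's angular pixel density. It assumes the 20 degrees are measured along the diagonal of the square panel and that pixels are spread evenly across that angle; the function name is my own and purely illustrative.

```python
import math


def pixels_per_degree(width_px, height_px, diagonal_fov_deg):
    """Approximate pixel density along the diagonal of the display.

    Assumes pixels are distributed evenly across the field of view,
    which is a reasonable simplification for a narrow 20-degree display.
    """
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_fov_deg


# Figures quoted above: 600 x 600 pixels, 20-degree diagonal field of view.
ppd = pixels_per_degree(600, 600, 20.0)
print(f"{ppd:.1f} pixels per degree")  # prints "42.4 pixels per degree"
```

Packing every pixel into such a narrow field of view is what keeps text crisp for quick glances, even though the image covers only a small part of what you see.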

Meta's Optical Components

The optical components of the Meta Ray-Ban Display are quite interesting, to say the least. The glasses use a geometric reflective waveguide paired with a micro projector based entirely on LCOS (Liquid Crystal on Silicon) technology. These components are a collaborative effort by Lumus, Schott, OmniVision, and Goertek.

The Neural Band Is the Input Engine

The Neural Band, included with the glasses, is a separate wrist-worn wearable. Its primary function is to remove the need for users to tap the glasses or use voice commands. Its sensor technology, the EMG input mentioned at the start of this post, is high-performance surface electromyography, which detects the electrical signals sent from the user's brain to the muscles at the wrist.

The sensors are capable of detecting the user's intention and micro-gestures (such as a thumb-to-index pinch) before the user's finger even moves. The Neural Band is constructed from Vectran, a liquid-crystal polymer also used in the landing airbags of Mars rovers, making it extremely durable and flexible.

The Computational Specs

Regarding hardware, the Meta Ray-Ban Display features a 12 MP camera, a Qualcomm Snapdragon AR1 Gen 1 processor, and, surprisingly, 32 GB of storage, as well as a five-mic array with open-ear audio.

The Battery Power

The display and EMG band require constant connectivity and syncing, making power management a dual-device effort. The glasses run for six hours of mixed use, including active display notifications and AI queries. The Neural Band runs on its own battery, with a total runtime of 18 hours. The Meta Ray-Ban Display comes packaged with a redesigned, collapsible leather charging case that provides an additional 24 hours of charge (8 full hours for the glasses).
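For a quick sense of how those numbers add up, here is a small sketch of the power budget. It uses only the six-hour, 18-hour, and 24-hour figures above, and it assumes the case's 24 hours are extra glasses runtime at the same mixed-use drain; the variable names are mine, not Meta's.

```python
# Runtime figures quoted in this post, in hours.
GLASSES_RUNTIME_H = 6.0   # glasses, mixed use
BAND_RUNTIME_H = 18.0     # Neural Band
CASE_EXTRA_H = 24.0       # additional charge from the case (assumed to be glasses runtime)

# Total glasses runtime before the case itself needs a wall charger.
total_with_case_h = GLASSES_RUNTIME_H + CASE_EXTRA_H

# How many full glasses sessions one Neural Band charge covers.
sessions_per_band_charge = BAND_RUNTIME_H / GLASSES_RUNTIME_H

print(f"Glasses plus case: {total_with_case_h:.0f} hours")
print(f"Glasses sessions per band charge: {sessions_per_band_charge:.0f}")
```

In practice, the glasses are the bottleneck: under these assumptions the band outlasts a single glasses charge three times over.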

My Final Thoughts

In this installment of Hardware Breakdown, I took an in-depth look at the sophisticated integration of the Snapdragon AR1 Gen 1 processor, a 12 MP camera, and a five-microphone system into a standard Wayfarer frame. It’s safe to say that Meta has successfully transformed smart eyewear from a bulky, poorly designed prototype into a consumer-grade product that has been well received by tech enthusiasts and consumers alike.